Elon Musk has just shared the full vision for xAI — and it is not what most people expected. This is not simply about making Grok smarter or launching better image models. This is about building the compute infrastructure needed to understand the universe itself, scaling from massive Earth-based data centers to orbital clusters, Moon-based factories, mass drivers launching AI satellites into deep space, and eventually harnessing a meaningful fraction of the Sun’s energy.

xAI is only two and a half years old. In that short time the company has already reached number one in several key areas: voice realism, image and video generation volume (Imagine is now producing more content than all competitors combined), forecasting accuracy, and large-scale training clusters.

But the real story Elon laid out goes much further — into territory that feels like science fiction becoming engineering reality.

Let’s break down the presentation step by step: the achievements so far, the new company structure, the compute scaling plan, the scientific results already being reported, and why this might be the most ambitious roadmap ever announced in AI.

Where xAI Stands Today – Only 2.5 Years In

Elon started by reminding everyone how young the company really is. “xAI is basically a toddler,” he said. Competitors have been around 5, 10, even 20 years, with much larger teams and far more starting resources. Yet xAI has already achieved number one positions in multiple arenas.

Key accomplishments highlighted:

- Grok models lead in voice realism and naturalness.

- Imagine (image and video generation) is now generating more content than every other provider combined — roughly 6 billion images and 50 million videos per month.

- The forecasting model (Grok 4.20) outperformed every other AI on predictive accuracy benchmarks.

- The Grok app is deeply integrated into X (formerly Twitter), with radical improvements to the core X experience.

- Grokipedia has launched and already has about 6 million articles. It is on track to become orders of magnitude more comprehensive than Wikipedia, including video, images, and real-time updates.

- First company to train on a 100,000 H100 GPU cluster — now scaling to 1 million H100-equivalent GPUs.
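To put the Imagine volume figures above in perspective, here is a quick back-of-the-envelope conversion from monthly totals to average per-second rates. This is only a sketch: the 30-day month is an assumption, the helper name `per_second` is made up for illustration, and the monthly totals are simply the figures quoted in the list.

```python
# Rough throughput implied by the monthly Imagine figures quoted above.
# Assumption: a 30-day month. The monthly totals are the article's figures.
SECONDS_PER_MONTH = 30 * 24 * 60 * 60  # 2,592,000 seconds


def per_second(monthly_total: float) -> float:
    """Convert a monthly count into an average per-second rate."""
    return monthly_total / SECONDS_PER_MONTH


images_per_second = per_second(6e9)   # roughly 6 billion images per month
videos_per_second = per_second(50e6)  # roughly 50 million videos per month

print(f"images per second: {images_per_second:,.0f}")
print(f"videos per second: {videos_per_second:,.1f}")
```

Under these assumptions, the quoted volumes work out to a few thousand images and roughly twenty videos every second, averaged around the clock.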

The central message: It is not about where you are today — it is about velocity and acceleration. If you are moving faster than everyone else, you will lead. And right now, no one is close to xAI’s speed.

New Company Structure – Reorganizing for Massive Scale

As xAI has grown from a small startup to several hundred people, the structure had to change. Elon compared it to biological growth: single cell → blob of cells → organ differentiation → full organism.

New organization splits into four main application areas + infrastructure:

- Grok Main & Voice: The core foundation model and voice stack, merged into one team. Already live in over 2 million Teslas, with the Grok voice agent API available and leading in natural voice realism. Goal: become the “everything portal” for legal questions, slide decks, puzzles, engineering problems, medicine, and anything else useful for understanding the universe.

- Coding-Specific Model: A dedicated team pushing recursive self-improvement in coding. Grok Code is already accelerating internal development dramatically. Prediction from Elon: by the end of 2026, most coding may bypass traditional compilers, with AI generating optimized binaries directly from intent.

- Image & Video (Imagine): Started from zero six months ago and is now #1 in generation volume. Goal: generate 10–20 minute coherent videos in one shot, then real-time interactive worlds (metaverse before Meta), rendered in real time so users can imagine, build, and interact with fully responsive digital worlds.

- Macrohard: Full digital emulation of entire companies (digital output only). Elon called this potentially the most important long-term project: AI running entire digital organizations end-to-end.

The infrastructure layer powers everything: training, inference, tooling, and the supercomputer build in Memphis (gigawatt-scale power, with the largest Tesla Megapack deployment in the world).
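As a sanity check on the "gigawatt-scale" description, the sketch below estimates the power draw of 1 million H100-class GPUs. It assumes NVIDIA's published maximum TDP of 700 W for the H100 SXM part. The facility overhead multiplier of 1.3 (cooling, networking, power conversion) is a hypothetical value chosen for illustration, not a figure from xAI, and `cluster_power_gw` is an illustrative helper.

```python
# Back-of-the-envelope power estimate for a 1-million-GPU cluster.
H100_TDP_WATTS = 700       # NVIDIA's stated maximum TDP for the H100 SXM
GPU_COUNT = 1_000_000      # "1 million H100-equivalent GPUs" from the article
FACILITY_OVERHEAD = 1.3    # ASSUMPTION: hypothetical multiplier for cooling etc.


def cluster_power_gw(gpu_count: int, watts_per_gpu: float, overhead: float = 1.0) -> float:
    """Return total power in gigawatts for a cluster of identical GPUs."""
    return gpu_count * watts_per_gpu * overhead / 1e9


gpu_only_gw = cluster_power_gw(GPU_COUNT, H100_TDP_WATTS)
with_overhead_gw = cluster_power_gw(GPU_COUNT, H100_TDP_WATTS, FACILITY_OVERHEAD)

print(f"GPUs alone:    {gpu_only_gw:.2f} GW")
print(f"With overhead: {with_overhead_gw:.2f} GW")
```

Under these assumptions the GPUs alone draw about 0.7 GW, which lands close to the gigawatt mark once facility overhead is added.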

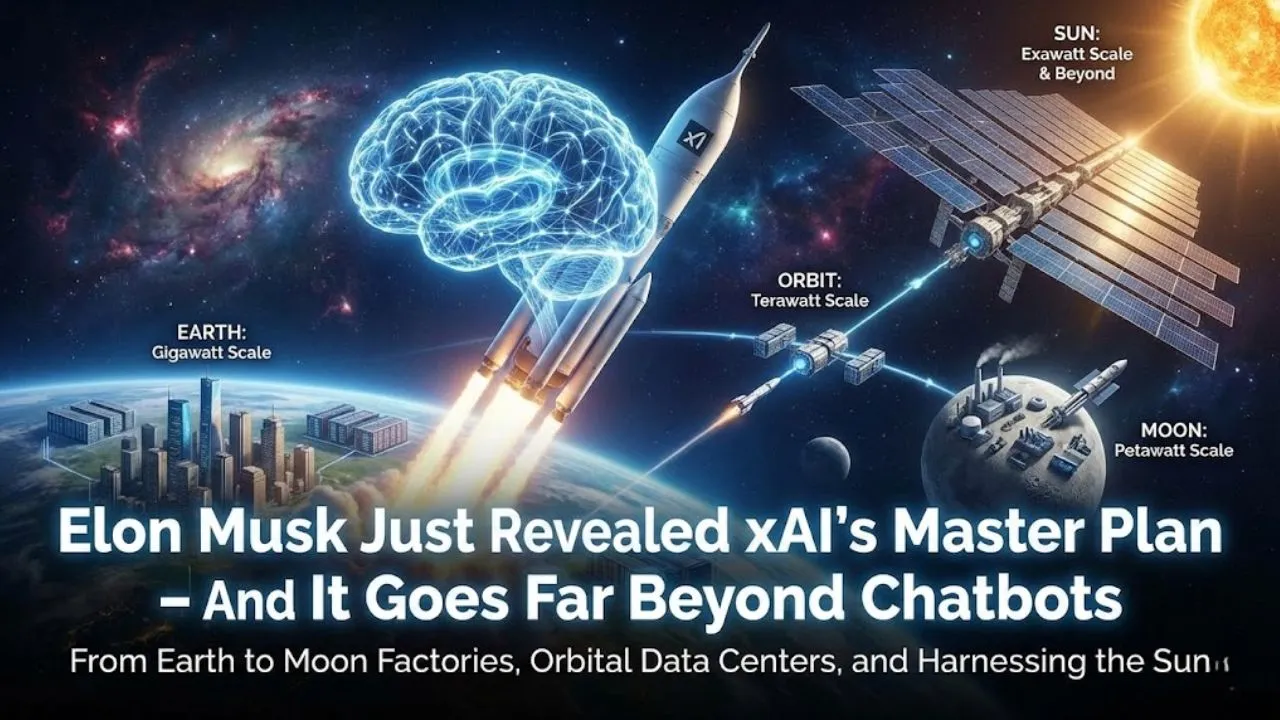

The Compute Scaling Roadmap – From Earth to the Stars

The biggest revelation was the long-term compute plan. Elon explained that civilization currently uses roughly 1% of Earth’s potential energy. To train models at meaningful scale — or even to make a dent in using the Sun’s energy — humanity must expand off-planet.

Phases:

- Earth: Already at 100,000 H100s and scaling to 1 million H100-equivalent GPUs. The Memphis supercluster will reach gigawatt-scale power.

- Orbital Data Centers: Launching with SpaceX at 100–200 gigawatts per year (not cumulative). Long-term goal: terawatt-per-year scale from orbit.

- Moon Factories + Mass Driver: Build factories on the Moon producing AI satellites. A mass driver (electromagnetic railgun) launches them into deep space. This unlocks thousands of gigawatts per year, orders of magnitude beyond Earth limits.

- Long-Term Vision: Harness a millionth, then a thousandth, then a few percent of the Sun’s energy. The Sun is 99.8% of the solar system’s mass. Earth is a tiny dust mote, and the only way to access serious energy is to leave the planet.
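To get a feel for the scale of the phases above, the sketch below converts fractions of the Sun's output into terawatts. It uses the IAU nominal solar luminosity of 3.828 × 10^26 W. The "one percent" entry stands in for "a few percent", and the helper name `fraction_in_terawatts` is made up for illustration.

```python
# How large is "a millionth of the Sun's energy" in terawatts?
SOLAR_LUMINOSITY_W = 3.828e26  # IAU nominal solar luminosity, in watts
TERAWATT = 1e12                # watts per terawatt


def fraction_in_terawatts(fraction: float) -> float:
    """Return the given fraction of the Sun's total output, in terawatts."""
    return SOLAR_LUMINOSITY_W * fraction / TERAWATT


# "one percent" is an illustrative stand-in for the article's "a few percent".
milestones = {"a millionth": 1e-6, "a thousandth": 1e-3, "one percent": 1e-2}

for label, fraction in milestones.items():
    print(f"{label:>13}: {fraction_in_terawatts(fraction):.3e} TW")
```

Even the first milestone, a millionth of the Sun's output, comes to hundreds of millions of terawatts, which is why the roadmap treats terawatt-per-year deployment from orbit as an early step and not the end goal.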

Real Scientific Impact Already Happening

Google teams showed concrete examples of Deep Think and Lethia in action:

- Mathematician Lisa Carbone (Rutgers) used it to spot a critical error in a peer-reviewed paper on infinite-dimensional algebra — rewrote the proposition correctly.

- Wang Lab achieved record 130-micron 2D semiconductor growth using Deep Think’s thermal profile recipe.

- Anupam Pathak (Google) iterated physical designs (turbine blades) faster than ever, with the AI suggesting shapes he hadn’t considered.

These are not demos — real researchers are using the system to accelerate actual work.


Lethia – The Autonomous Research Agent

Built on Deep Think, Lethia can:

- Pick open problems

- Solve them

- Write full research papers

- Submit to journals

Results so far:

- Autonomously wrote a paper on weights in arithmetic geometry, which was submitted to a journal.

- From a database of Erdős conjectures (700 unsolved problems), it solved 4 completely on its own.

- One unsolved problem led to a broader generalization — human mathematicians published a paper building on Lethia’s finding.

Google created a classification table for AI research contributions:

- Level 0–1: basic help

- Level 2: publishable research (Lethia already here multiple times)

- Level 3–4: landmark breakthroughs (still empty — no AI has solved a Millennium Prize problem yet)

Lethia is already delivering Level 2 results — some fully autonomous.

The Bigger Picture – Recursive Self-Improvement & Beyond

xAI’s path is clear:

- Recursive self-improvement in coding (Grok Code training next Grok Code)

- Exponential takeoff in video generation (10–20 minute coherent videos soon, real-time interactive worlds later)

- Macrohard emulating entire digital companies

- Compute scaling from 1 million GPUs on Earth → terawatts from orbit → millions of gigawatts via Moon factories and mass drivers

- Ultimate goal: understand the universe by exploring it — extending consciousness to the stars

Elon’s final point: Earth is a tiny dust mote in vast darkness. To understand the universe, we must go out there. To power that understanding, we need energy at scales only possible off-planet.

This is not hype. The benchmarks, the real lab examples, the Memphis gigawatt cluster, the orbital and lunar plans — all of it is actively being executed.

What do you think — is xAI’s roadmap the most ambitious in AI history? Or just classic Elon vision? Drop your thoughts in the comments.

Disclaimer: This article is based on Elon Musk’s public statements, xAI team presentations, benchmark data, and related announcements as of February 2026. Some timelines, compute scales, and Lethia results are forward-looking or early/preview. AI progress moves extremely fast, so always verify the latest details through official xAI channels, X posts, or grok.com. This is not investment, technical, or career advice.